Alex Zhuang

committed on

Commit

·

15d8d07

1

Parent(s):

4f2b187

new model

- README.md +0 -131

- added_tokens.json +0 -10

- model-00001-of-00002.safetensors +0 -3

- model-00002-of-00002.safetensors +0 -3

- pytorch_model-00001-of-00002.bin +0 -3

- pytorch_model-00002-of-00002.bin +0 -3

- pytorch_model.bin.index.json +0 -298

README.md

DELETED

|

@@ -1,131 +0,0 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: mit

|

| 3 |

-

datasets:

|

| 4 |

-

- TIGER-Lab/SKGInstruct

|

| 5 |

-

language:

|

| 6 |

-

- en

|

| 7 |

-

---

|

| 8 |

-

# 🏗️ StructLM: Towards Building Generalist Models for Structured Knowledge Grounding

|

| 9 |

-

|

| 10 |

-

<span style="color:red">This checkpoint seems to have an issue; please use https://huggingface.co/TIGER-Lab/StructLM-7B-Mistral instead.</span>

|

| 11 |

-

|

| 12 |

-

Project Page: [https://tiger-ai-lab.github.io/StructLM/](https://tiger-ai-lab.github.io/StructLM/)

|

| 13 |

-

|

| 14 |

-

Paper: [https://arxiv.org/pdf/2402.16671.pdf](https://arxiv.org/pdf/2402.16671.pdf)

|

| 15 |

-

|

| 16 |

-

Code: [https://github.com/TIGER-AI-Lab/StructLM](https://github.com/TIGER-AI-Lab/StructLM)

|

| 17 |

-

|

| 18 |

-

|

| 19 |

-

|

| 20 |

-

|

| 21 |

-

## Introduction

|

| 22 |

-

StructLM is a series of open-source large language models (LLMs) fine-tuned for structured knowledge grounding (SKG) tasks. We release 3 models:

|

| 23 |

-

|

| 24 |

-

7B | [StructLM-7B](https://huggingface.co/TIGER-Lab/StructLM-7B)

|

| 25 |

-

|

| 26 |

-

13B | [StructLM-13B](https://huggingface.co/TIGER-Lab/StructLM-13B)

|

| 27 |

-

|

| 28 |

-

34B | [StructLM-34B](https://huggingface.co/TIGER-Lab/StructLM-34B)

|

| 29 |

-

|

| 30 |

-

|

| 31 |

-

## Training Data

|

| 32 |

-

These models are trained on 🤗 [SKGInstruct Dataset](https://huggingface.co/datasets/TIGER-Lab/SKGInstruct), an instruction-tuning dataset containing a mixture of 19 SKG tasks combined with 🤗 [SlimOrca](https://huggingface.co/datasets/Open-Orca/SlimOrca). Check out the dataset card for more details.

|

| 33 |

-

|

| 34 |

-

|

| 35 |

-

## Training Procedure

|

| 36 |

-

The models are fine-tuned from CodeLlama-Instruct-hf base models. Each model is trained for 3 epochs, and the best checkpoint is selected.

|

| 37 |

-

|

| 38 |

-

## Evaluation

|

| 39 |

-

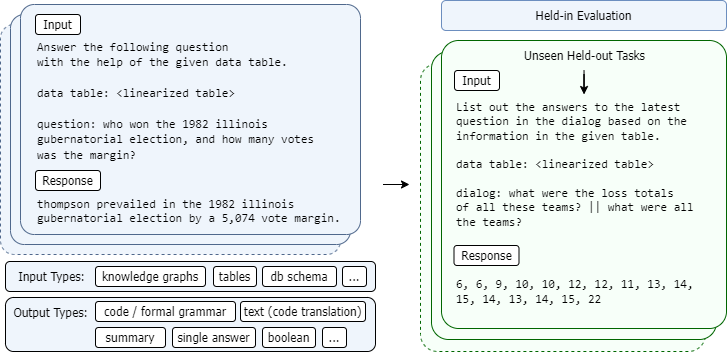

Here is a subset of model evaluation results:

|

| 40 |

-

|

| 41 |

-

### Held in

|

| 42 |

-

|

| 43 |

-

| **Model** | **ToTTo** | **GrailQA** | **CompWebQ** | **MMQA** | **Feverous** | **Spider** | **TabFact** | **Dart** |

|

| 44 |

-

|-----------------------|--------------|----------|----------|----------|----------|----------|----------|----------|

|

| 45 |

-

| **StructLM-7B** | 49.4 | 80.4 | 78.3 | 85.2 | 84.4 | 72.4 | 80.8 | 62.2 |

|

| 46 |

-

| **StructLM-13B** | 49.3 | 79.2 | 80.4 | 86.0 | 85.0 | 74.1 | 84.7 | 61.4 |

|

| 47 |

-

| **StructLM-34B** | 50.2 | 82.2 | 81.9 | 88.1 | 85.7 | 74.6 | 86.6 | 61.8 |

|

| 48 |

-

|

| 49 |

-

|

| 50 |

-

### Held out

|

| 51 |

-

| **Model** | **BIRD** | **InfoTabs** | **FinQA** | **SQA** |

|

| 52 |

-

|-----------------------|--------------|----------|----------|----------|

|

| 53 |

-

| **StructLM-7B** | 22.3 | 55.3 | 27.3 | 49.7 |

|

| 54 |

-

| **StructLM-13B** | 22.8 | 58.1 | 25.6 | 36.1 |

|

| 55 |

-

| **StructLM-34B** | 24.7 | 61.8 | 36.2 | 44.2 |

|

| 56 |

-

|

| 57 |

-

|

| 58 |

-

## Usage

|

| 59 |

-

You can use the models through Hugging Face's Transformers library.

|

| 60 |

-

Check our GitHub repo for the evaluation code: [https://github.com/TIGER-AI-Lab/StructLM](https://github.com/TIGER-AI-Lab/StructLM)

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

## Prompt Format

|

| 64 |

-

|

| 65 |

-

**For this 7B model, the prompt format (different from 13B, 34B) is**

|

| 66 |

-

```

|

| 67 |

-

[INST] <<SYS>>

|

| 68 |

-

You are an AI assistant that specializes in analyzing and reasoning over structured information. You will be given a task, optionally with some structured knowledge input. Your answer must strictly adhere to the output format, if specified.

|

| 69 |

-

<</SYS>>

|

| 70 |

-

{instruction} [/INST]

|

| 71 |

-

```

|

| 72 |

-

|

| 73 |

-

To see concrete examples of this linearization, you can directly reference the 🤗 [SKGInstruct Dataset](https://huggingface.co/datasets/TIGER-Lab/SKGInstruct) (coming soon).

|

| 74 |

-

We will provide code for linearizing this data shortly.

|

| 75 |

-

|

| 76 |

-

|

| 77 |

-

A few examples:

|

| 78 |

-

|

| 79 |

-

**Tabular data**

|

| 80 |

-

```

|

| 81 |

-

col : day | kilometers row 1 : tuesday | 0 row 2 : wednesday | 0 row 3 : thursday | 4 row 4 : friday | 0 row 5 : saturday | 0

|

| 82 |

-

```
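The tabular linearization above can be reproduced in a few lines of Python. This is a minimal sketch, assuming the format is exactly what the example shows (a `col :` header followed by `row N :` entries, with cells joined by ` | `). The function name `linearize_table` is hypothetical and is not taken from the StructLM codebase.

```python
from typing import Sequence


def linearize_table(header: Sequence[str], rows: Sequence[Sequence[object]]) -> str:
    """Flatten a table into the 'col : ... row 1 : ...' string shown above."""
    # Header cells are joined with ' | ' and prefixed with 'col : '.
    parts = ["col : " + " | ".join(str(h) for h in header)]
    # Rows are numbered from 1 and use the same cell separator.
    for index, row in enumerate(rows, start=1):
        parts.append(f"row {index} : " + " | ".join(str(cell) for cell in row))
    # Everything ends up on one line, separated by single spaces.
    return " ".join(parts)


table_text = linearize_table(
    ["day", "kilometers"],
    [["tuesday", 0], ["wednesday", 0], ["thursday", 4], ["friday", 0], ["saturday", 0]],
)
print(table_text)
```

Keeping the whole table on one line mirrors the example; whether the original pipeline normalizes case or whitespace is not stated here, so this sketch passes cell values through unchanged.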

|

| 83 |

-

|

| 84 |

-

**Knowledge triples (dart)**

|

| 85 |

-

```

|

| 86 |

-

Hawaii Five-O : notes : Episode: The Flight of the Jewels | [TABLECONTEXT] : [title] : Jeff Daniels | [TABLECONTEXT] : title : Hawaii Five-O

|

| 87 |

-

```
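The triple format above appears to join the three fields of each triple with ` : ` and separate triples with ` | `. The following is a small sketch under that assumption; `linearize_triples` is a hypothetical helper name, not a function from the project.

```python
from typing import Iterable, Tuple

Triple = Tuple[str, str, str]


def linearize_triples(triples: Iterable[Triple]) -> str:
    """Join (subject, relation, object) triples in the style of the DART example."""
    # Fields inside a triple are separated by ' : ', triples by ' | '.
    return " | ".join(" : ".join(triple) for triple in triples)


triples_text = linearize_triples([
    ("Hawaii Five-O", "notes", "Episode: The Flight of the Jewels"),
    ("[TABLECONTEXT]", "[title]", "Jeff Daniels"),
    ("[TABLECONTEXT]", "title", "Hawaii Five-O"),
])
print(triples_text)
```

Note that a field may itself contain a colon (as in `Episode: The Flight of the Jewels`), so the linearized string cannot always be split back into triples unambiguously.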

|

| 88 |

-

|

| 89 |

-

**Knowledge graph schema (grailqa)**

|

| 90 |

-

```

|

| 91 |

-

top antiquark: m.094nrqp | physics.particle_antiparticle.self_antiparticle physics.particle_family physics.particle.antiparticle physics.particle_family.subclasses physics.subatomic_particle_generation physics.particle_family.particles physics.particle common.image.appears_in_topic_gallery physics.subatomic_particle_generation.particles physics.particle.family physics.particle_family.parent_class physics.particle_antiparticle physics.particle_antiparticle.particle physics.particle.generation

|

| 92 |

-

```

|

| 93 |

-

|

| 94 |

-

**Example input**

|

| 95 |

-

|

| 96 |

-

```

|

| 97 |

-

[INST] <<SYS>>

|

| 98 |

-

You are an AI assistant that specializes in analyzing and reasoning over structured information. You will be given a task, optionally with some structured knowledge input. Your answer must strictly adhere to the output format, if specified.

|

| 99 |

-

<</SYS>>

|

| 100 |

-

|

| 101 |

-

Use the information in the following table to solve the problem, choose between the choices if they are provided. table:

|

| 102 |

-

|

| 103 |

-

col : day | kilometers row 1 : tuesday | 0 row 2 : wednesday | 0 row 3 : thursday | 4 row 4 : friday | 0 row 5 : saturday | 0

|

| 104 |

-

|

| 105 |

-

|

| 106 |

-

question:

|

| 107 |

-

|

| 108 |

-

Allie kept track of how many kilometers she walked during the past 5 days. What is the range of the numbers? [/INST]

|

| 109 |

-

```
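The example input can be assembled programmatically from the template and the system prompt given above. The sketch below assumes single newlines between the template parts and blank lines around the table. The exact whitespace used during training is not recoverable from this page, so treat the spacing as an assumption. `build_prompt` and `SYSTEM_PROMPT` are illustrative names.

```python
SYSTEM_PROMPT = (
    "You are an AI assistant that specializes in analyzing and reasoning over "
    "structured information. You will be given a task, optionally with some "
    "structured knowledge input. Your answer must strictly adhere to the output "
    "format, if specified."
)


def build_prompt(instruction: str, system_prompt: str = SYSTEM_PROMPT) -> str:
    """Wrap an instruction in the [INST] <<SYS>> ... <</SYS>> ... [/INST] template."""
    return f"[INST] <<SYS>>\n{system_prompt}\n<</SYS>>\n{instruction} [/INST]"


# Linearized table and question copied from the example above.
table = (
    "col : day | kilometers row 1 : tuesday | 0 row 2 : wednesday | 0 "
    "row 3 : thursday | 4 row 4 : friday | 0 row 5 : saturday | 0"
)
instruction = (
    "Use the information in the following table to solve the problem, "
    "choose between the choices if they are provided. table:\n\n"
    f"{table}\n\n"
    "question:\n\n"
    "Allie kept track of how many kilometers she walked during the past 5 days. "
    "What is the range of the numbers?"
)

prompt = build_prompt(instruction)
print(prompt)
```

The resulting string is what would be tokenized and passed to the model; the model's completion is expected to follow the closing `[/INST]` tag.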

|

| 110 |

-

|

| 111 |

-

|

| 112 |

-

## Intended Uses

|

| 113 |

-

These models are trained for research purposes. They are designed to be proficient in interpreting linearized structured input. Downstream uses can potentially include various applications requiring the interpretation of structured data.

|

| 114 |

-

|

| 115 |

-

## Limitations

|

| 116 |

-

While we've tried to build an SKG-specialized model capable of generalizing, we have shown that this is a challenging domain, and the model may lack performance characteristics that allow it to be directly used in chat or other applications.

|

| 117 |

-

|

| 118 |

-

|

| 119 |

-

## Citation

|

| 120 |

-

If you use the models, data, or code from this project, please cite the original paper:

|

| 121 |

-

|

| 122 |

-

```

|

| 123 |

-

@misc{zhuang2024structlm,

|

| 124 |

-

title={StructLM: Towards Building Generalist Models for Structured Knowledge Grounding},

|

| 125 |

-

author={Alex Zhuang and Ge Zhang and Tianyu Zheng and Xinrun Du and Junjie Wang and Weiming Ren and Stephen W. Huang and Jie Fu and Xiang Yue and Wenhu Chen},

|

| 126 |

-

year={2024},

|

| 127 |

-

eprint={2402.16671},

|

| 128 |

-

archivePrefix={arXiv},

|

| 129 |

-

primaryClass={cs.CL}

|

| 130 |

-

}

|

| 131 |

-

```


added_tokens.json

DELETED

|

@@ -1,10 +0,0 @@

|

|

| 1 |

-

{

|

| 2 |

-

"</s>": 2,

|

| 3 |

-

"<s>": 1,

|

| 4 |

-

"<unk>": 0,

|

| 5 |

-

"[PAD]": 32016,

|

| 6 |

-

"▁<EOT>": 32010,

|

| 7 |

-

"▁<MID>": 32009,

|

| 8 |

-

"▁<PRE>": 32007,

|

| 9 |

-

"▁<SUF>": 32008

|

| 10 |

-

}
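As a quick way to inspect the deleted `added_tokens.json`, the mapping can be loaded and inverted to look tokens up by ID. This is only an illustrative sketch: the JSON is copied from the diff above, and the "minimum embedding rows" figure is an inference that assumes token IDs are zero-based, not something the commit states.

```python
import json

# Contents of the deleted added_tokens.json, copied from the diff above.
ADDED_TOKENS_JSON = """
{
  "</s>": 2,
  "<s>": 1,
  "<unk>": 0,
  "[PAD]": 32016,
  "▁<EOT>": 32010,
  "▁<MID>": 32009,
  "▁<PRE>": 32007,
  "▁<SUF>": 32008
}
"""

token_to_id = json.loads(ADDED_TOKENS_JSON)

# Invert the mapping so tokens can be looked up by ID.
id_to_token = {token_id: token for token, token_id in token_to_id.items()}

# With zero-based IDs, the embedding matrix needs at least max_id + 1 rows.
min_embedding_rows = max(id_to_token) + 1

for token_id in sorted(id_to_token):
    print(token_id, repr(id_to_token[token_id]))
print("minimum embedding rows:", min_embedding_rows)
```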


model-00001-of-00002.safetensors

DELETED

|

@@ -1,3 +0,0 @@

|

|

| 1 |

-

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:ffef76d4c866383aae3dbee7ad7644142e468290fbc3cd4ddcb4ed0a215b8f5a

|

| 3 |

-

size 9976709784


model-00002-of-00002.safetensors

DELETED

|

@@ -1,3 +0,0 @@

|

|

| 1 |

-

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:7da24f857fb081fb4ccc93dd0d0ad522fd54d1d66bbf98545f1c4bb578604faa

|

| 3 |

-

size 3500433808


pytorch_model-00001-of-00002.bin

DELETED

|

@@ -1,3 +0,0 @@

|

|

| 1 |

-

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:dce73d8f6da54b9d05af5463024c6ee74365bcfb57ea55e5e4c86b50b18f4cb4

|

| 3 |

-

size 9976759873


pytorch_model-00002-of-00002.bin

DELETED

|

@@ -1,3 +0,0 @@

|

|

| 1 |

-

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:b39192802f2bcec3ad308796aa3bb2220b65c9fa5f83fd7bc024e39878138b2b

|

| 3 |

-

size 3500450526
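Each of the four deleted weight files above is a Git LFS pointer: three `key value` lines giving the spec version, the object ID, and the size in bytes. The following sketch parses one such pointer. `parse_lfs_pointer` is a hypothetical helper written for illustration and handles only the three keys that appear in this diff.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'version', 'oid' and 'size' lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        # Each line is '<key> <value>', split on the first space only.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form '<hash algorithm>:<hex digest>'.
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer for pytorch_model-00002-of-00002.bin, copied from the diff above.
pointer_text = """\
version https://git-lfs.github.com/spec/v1
oid sha256:b39192802f2bcec3ad308796aa3bb2220b65c9fa5f83fd7bc024e39878138b2b
size 3500450526
"""

pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algorithm"], pointer["size_bytes"])
```

The pointer carries only metadata; the weights themselves live in LFS storage, which is why each of these files shows just three deleted lines.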


pytorch_model.bin.index.json

DELETED

|

@@ -1,298 +0,0 @@

|

|

| 1 |

-

{

|

| 2 |

-

"metadata": {

|

| 3 |

-

"total_size": 13477109760

|

| 4 |

-

},

|

| 5 |

-

"weight_map": {

|

| 6 |

-

"lm_head.weight": "pytorch_model-00002-of-00002.bin",

|

| 7 |

-

"model.embed_tokens.weight": "pytorch_model-00001-of-00002.bin",

|

| 8 |

-

"model.layers.0.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 9 |

-

"model.layers.0.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 10 |

-

"model.layers.0.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 11 |

-

"model.layers.0.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 12 |

-

"model.layers.0.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 13 |

-

"model.layers.0.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 14 |

-

"model.layers.0.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 15 |

-

"model.layers.0.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 16 |

-

"model.layers.0.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 17 |

-

"model.layers.1.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 18 |

-

"model.layers.1.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 19 |

-

"model.layers.1.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 20 |

-

"model.layers.1.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 21 |

-

"model.layers.1.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 22 |

-

"model.layers.1.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 23 |

-

"model.layers.1.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 24 |

-

"model.layers.1.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 25 |

-

"model.layers.1.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 26 |

-

"model.layers.10.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 27 |

-

"model.layers.10.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 28 |

-

"model.layers.10.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 29 |

-

"model.layers.10.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 30 |

-

"model.layers.10.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 31 |

-

"model.layers.10.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 32 |

-

"model.layers.10.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 33 |

-

"model.layers.10.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 34 |

-

"model.layers.10.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 35 |

-

"model.layers.11.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 36 |

-

"model.layers.11.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 37 |

-

"model.layers.11.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 38 |

-

"model.layers.11.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 39 |

-

"model.layers.11.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 40 |

-

"model.layers.11.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 41 |

-

"model.layers.11.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 42 |

-

"model.layers.11.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 43 |

-

"model.layers.11.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 44 |

-

"model.layers.12.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 45 |

-

"model.layers.12.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 46 |

-

"model.layers.12.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 47 |

-

"model.layers.12.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 48 |

-

"model.layers.12.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 49 |

-

"model.layers.12.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 50 |

-

"model.layers.12.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 51 |

-

"model.layers.12.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 52 |

-

"model.layers.12.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 53 |

-

"model.layers.13.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 54 |

-

"model.layers.13.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 55 |

-

"model.layers.13.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 56 |

-

"model.layers.13.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 57 |

-

"model.layers.13.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 58 |

-

"model.layers.13.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 59 |

-

"model.layers.13.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 60 |

-

"model.layers.13.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 61 |

-

"model.layers.13.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 62 |

-

"model.layers.14.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 63 |

-

"model.layers.14.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 64 |

-

"model.layers.14.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 65 |

-

"model.layers.14.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 66 |

-

"model.layers.14.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 67 |

-

"model.layers.14.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 68 |

-

"model.layers.14.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 69 |

-

"model.layers.14.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 70 |

-

"model.layers.14.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 71 |

-

"model.layers.15.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 72 |

-

"model.layers.15.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 73 |

-

"model.layers.15.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 74 |

-

"model.layers.15.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 75 |

-

"model.layers.15.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 76 |

-

"model.layers.15.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 77 |

-

"model.layers.15.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 78 |

-

"model.layers.15.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 79 |

-

"model.layers.15.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 80 |

-

"model.layers.16.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 81 |

-

"model.layers.16.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 82 |

-

"model.layers.16.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 83 |

-

"model.layers.16.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 84 |

-

"model.layers.16.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 85 |

-

"model.layers.16.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 86 |

-

"model.layers.16.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 87 |

-

"model.layers.16.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 88 |

-

"model.layers.16.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 89 |

-

"model.layers.17.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 90 |

-

"model.layers.17.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 91 |

-

"model.layers.17.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 92 |

-

"model.layers.17.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 93 |

-

"model.layers.17.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 94 |

-

"model.layers.17.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 95 |

-

"model.layers.17.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 96 |

-

"model.layers.17.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 97 |

-

"model.layers.17.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 98 |

-

"model.layers.18.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 99 |

-

"model.layers.18.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 100 |

-

"model.layers.18.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 101 |

-

"model.layers.18.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 102 |

-

"model.layers.18.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 103 |

-

"model.layers.18.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 104 |

-

"model.layers.18.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 105 |

-

"model.layers.18.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 106 |

-

"model.layers.18.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 107 |

-

"model.layers.19.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 108 |

-

"model.layers.19.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 109 |

-

"model.layers.19.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 110 |

-

"model.layers.19.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 111 |

-

"model.layers.19.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 112 |

-

"model.layers.19.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 113 |

-

"model.layers.19.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 114 |

-

"model.layers.19.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 115 |

-

"model.layers.19.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 116 |

-

"model.layers.2.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 117 |

-

"model.layers.2.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 118 |

-

"model.layers.2.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 119 |

-

"model.layers.2.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 120 |

-

"model.layers.2.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 121 |

-

"model.layers.2.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 122 |

-

"model.layers.2.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 123 |

-

"model.layers.2.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 124 |

-

"model.layers.2.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 125 |

-

"model.layers.20.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 126 |

-

"model.layers.20.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 127 |

-

"model.layers.20.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 128 |

-

"model.layers.20.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 129 |

-

"model.layers.20.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 130 |

-

"model.layers.20.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 131 |

-

"model.layers.20.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 132 |

-

"model.layers.20.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 133 |

-

"model.layers.20.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 134 |

-

"model.layers.21.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 135 |

-

"model.layers.21.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 136 |

-

"model.layers.21.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 137 |

-

"model.layers.21.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 138 |

-

"model.layers.21.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 139 |

-

"model.layers.21.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 140 |

-

"model.layers.21.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 141 |

-

"model.layers.21.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 142 |

-

"model.layers.21.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 143 |

-

"model.layers.22.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 144 |

-

"model.layers.22.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 145 |

-

"model.layers.22.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 146 |

-

"model.layers.22.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 147 |

-

"model.layers.22.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 148 |

-

"model.layers.22.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 149 |

-

"model.layers.22.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 150 |

-

"model.layers.22.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 151 |

-

"model.layers.22.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 152 |

-

"model.layers.23.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 153 |

-

"model.layers.23.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 154 |

-

"model.layers.23.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 155 |

-

"model.layers.23.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 156 |

-

"model.layers.23.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 157 |

-

"model.layers.23.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 158 |

-

"model.layers.23.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 159 |

-

"model.layers.23.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 160 |

-

"model.layers.23.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 161 |

-

"model.layers.24.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 162 |

-

"model.layers.24.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 163 |

-

"model.layers.24.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 164 |

-

"model.layers.24.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 165 |

-

"model.layers.24.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 166 |

-

"model.layers.24.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 167 |

-

"model.layers.24.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 168 |

-

"model.layers.24.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 169 |

-

"model.layers.24.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 170 |

-

"model.layers.25.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 171 |

-

"model.layers.25.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 172 |

-

"model.layers.25.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 173 |

-

"model.layers.25.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 174 |

-

"model.layers.25.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 175 |

-

"model.layers.25.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 176 |

-

"model.layers.25.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 177 |

-

"model.layers.25.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 178 |

-

"model.layers.25.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 179 |

-

"model.layers.26.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 180 |

-

"model.layers.26.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 181 |

-

"model.layers.26.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 182 |

-

"model.layers.26.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 183 |

-

"model.layers.26.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 184 |

-

"model.layers.26.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 185 |

-

"model.layers.26.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 186 |

-

"model.layers.26.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 187 |

-

"model.layers.26.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 188 |

-

"model.layers.27.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 189 |

-

"model.layers.27.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 190 |

-

"model.layers.27.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 191 |

-

"model.layers.27.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 192 |

-

"model.layers.27.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 193 |

-

"model.layers.27.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 194 |

-

"model.layers.27.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 195 |

-

"model.layers.27.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 196 |

-

"model.layers.27.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 197 |

-

"model.layers.28.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 198 |

-

"model.layers.28.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 199 |

-

"model.layers.28.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 200 |

-

"model.layers.28.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 201 |

-

"model.layers.28.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 202 |

-

"model.layers.28.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 203 |

-

"model.layers.28.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 204 |

-

"model.layers.28.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 205 |

-

"model.layers.28.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 206 |

-

"model.layers.29.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 207 |

-

"model.layers.29.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 208 |

-

"model.layers.29.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 209 |

-

"model.layers.29.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 210 |

-

"model.layers.29.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 211 |

-

"model.layers.29.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 212 |

-

"model.layers.29.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 213 |

-

"model.layers.29.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 214 |

-

"model.layers.29.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 215 |

-

"model.layers.3.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 216 |

-

"model.layers.3.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 217 |

-

"model.layers.3.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 218 |

-

"model.layers.3.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 219 |

-

"model.layers.3.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 220 |

-

"model.layers.3.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 221 |

-

"model.layers.3.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 222 |

-

"model.layers.3.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 223 |

-

"model.layers.3.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 224 |

-

"model.layers.30.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 225 |

-

"model.layers.30.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 226 |

-

"model.layers.30.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 227 |

-

"model.layers.30.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 228 |

-

"model.layers.30.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 229 |

-

"model.layers.30.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 230 |

-

"model.layers.30.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 231 |

-

"model.layers.30.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 232 |

-

"model.layers.30.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 233 |

-

"model.layers.31.input_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 234 |

-

"model.layers.31.mlp.down_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 235 |

-

"model.layers.31.mlp.gate_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 236 |

-

"model.layers.31.mlp.up_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 237 |

-

"model.layers.31.post_attention_layernorm.weight": "pytorch_model-00002-of-00002.bin",

|

| 238 |

-

"model.layers.31.self_attn.k_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 239 |

-

"model.layers.31.self_attn.o_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 240 |

-

"model.layers.31.self_attn.q_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 241 |

-

"model.layers.31.self_attn.v_proj.weight": "pytorch_model-00002-of-00002.bin",

|

| 242 |

-

"model.layers.4.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 243 |

-

"model.layers.4.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 244 |

-

"model.layers.4.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 245 |

-

"model.layers.4.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 246 |

-

"model.layers.4.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 247 |

-

"model.layers.4.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 248 |

-

"model.layers.4.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 249 |

-

"model.layers.4.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 250 |

-

"model.layers.4.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 251 |

-

"model.layers.5.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 252 |

-

"model.layers.5.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 253 |

-

"model.layers.5.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 254 |

-

"model.layers.5.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 255 |

-

"model.layers.5.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 256 |

-

"model.layers.5.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 257 |

-

"model.layers.5.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 258 |

-

"model.layers.5.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 259 |

-

"model.layers.5.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 260 |

-

"model.layers.6.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 261 |

-

"model.layers.6.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 262 |

-

"model.layers.6.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 263 |

-

"model.layers.6.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 264 |

-

"model.layers.6.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 265 |

-

"model.layers.6.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 266 |

-

"model.layers.6.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 267 |

-

"model.layers.6.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 268 |

-

"model.layers.6.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 269 |

-

"model.layers.7.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 270 |

-

"model.layers.7.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 271 |

-

"model.layers.7.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 272 |

-

"model.layers.7.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 273 |

-

"model.layers.7.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 274 |

-

"model.layers.7.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 275 |

-

"model.layers.7.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 276 |

-

"model.layers.7.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 277 |

-

"model.layers.7.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 278 |

-

"model.layers.8.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 279 |

-

"model.layers.8.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 280 |

-

"model.layers.8.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 281 |

-

"model.layers.8.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 282 |

-

"model.layers.8.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 283 |

-

"model.layers.8.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 284 |

-

"model.layers.8.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 285 |

-

"model.layers.8.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 286 |

-

"model.layers.8.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 287 |

-

"model.layers.9.input_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 288 |

-

"model.layers.9.mlp.down_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 289 |

-

"model.layers.9.mlp.gate_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 290 |

-

"model.layers.9.mlp.up_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 291 |

-

"model.layers.9.post_attention_layernorm.weight": "pytorch_model-00001-of-00002.bin",

|

| 292 |

-

"model.layers.9.self_attn.k_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 293 |

-

"model.layers.9.self_attn.o_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 294 |

-

"model.layers.9.self_attn.q_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 295 |

-

"model.layers.9.self_attn.v_proj.weight": "pytorch_model-00001-of-00002.bin",

|

| 296 |

-

"model.norm.weight": "pytorch_model-00002-of-00002.bin"

|

| 297 |

-

}

|

| 298 |

-

}

|
