Upload folder using huggingface_hub

- .gitattributes +1 -0

- README.md +261 -0

- added_tokens.json +28 -0

- chat_template.jinja +86 -0

- config.json +152 -0

- generation_config.json +14 -0

- merges.txt +0 -0

- model.safetensors +3 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +241 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text

README.md

ADDED


@@ -0,0 +1,261 @@


---
tags:
- unsloth
library_name: transformers
license: apache-2.0
license_link: https://huggingface.co/Qwen/Qwen3-4B-Thinking-2507-FP8/blob/main/LICENSE
pipeline_tag: text-generation
base_model:
- Qwen/Qwen3-4B-Thinking-2507-FP8
---
<div>
<p style="margin-top: 0;margin-bottom: 0;">
<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>
</p>
<div style="display: flex; gap: 5px; align-items: center; ">
<a href="https://github.com/unslothai/unsloth/">
<img src="https://github.com/unslothai/unsloth/raw/main/images/unsloth%20new%20logo.png" width="133">
</a>
<a href="https://discord.gg/unsloth">
<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">
</a>
<a href="https://docs.unsloth.ai/">
<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">
</a>
</div>
</div>

|

| 27 |

+

|

| 28 |

+

|

| 29 |

+

# Qwen3-4B-Thinking-2507-FP8

|

| 30 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 31 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 32 |

+

</a>

|

| 33 |

+

|

| 34 |

+

## Highlights

|

| 35 |

+

|

| 36 |

+

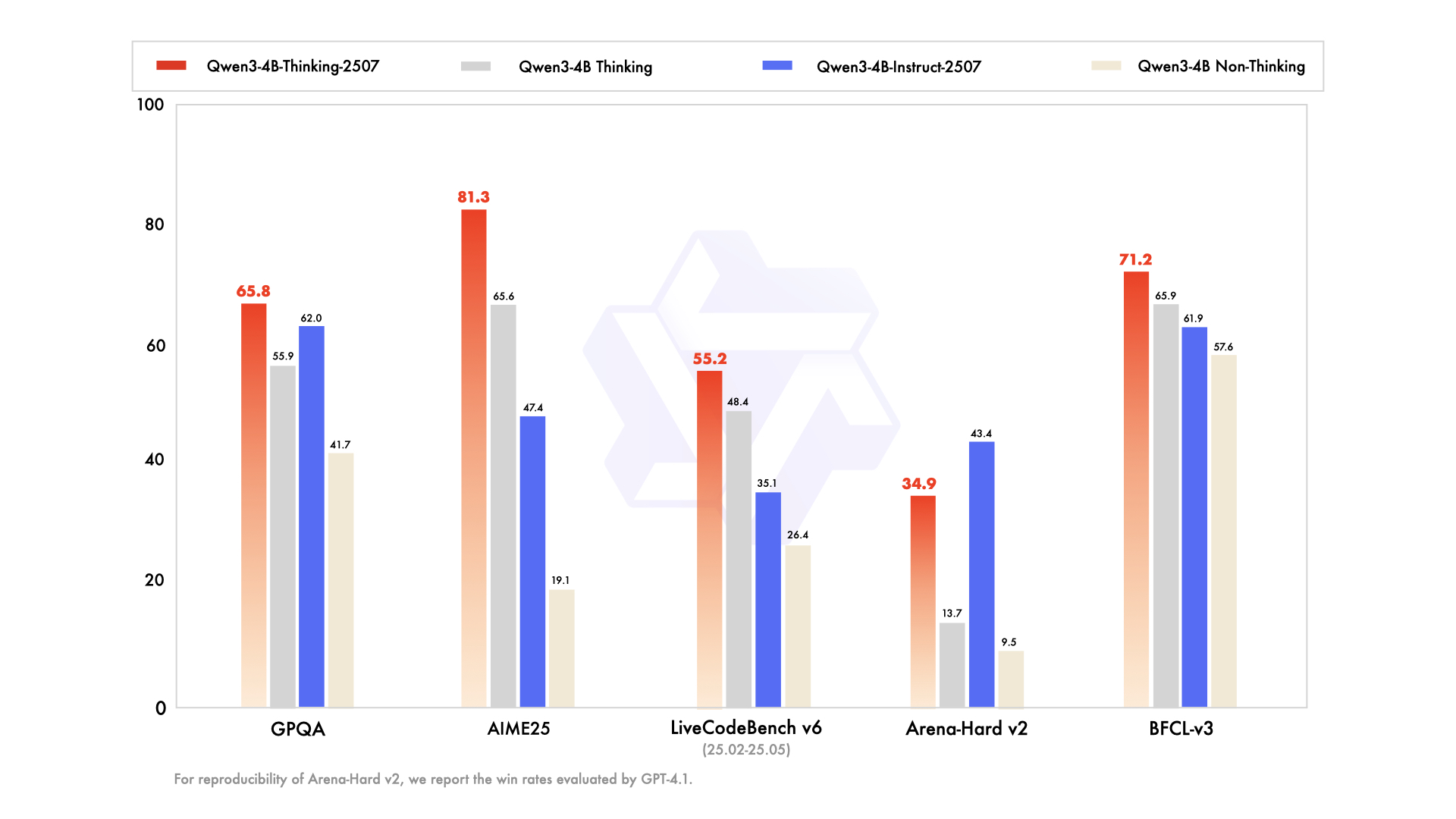

Over the past three months, we have continued to scale the **thinking capability** of Qwen3-4B, improving both the **quality and depth** of reasoning. We are pleased to introduce **Qwen3-4B-Thinking-2507-FP8**, featuring the following key enhancements:

|

| 37 |

+

|

| 38 |

+

- **Significantly improved performance** on reasoning tasks, including logical reasoning, mathematics, science, coding, and academic benchmarks that typically require human expertise.

|

| 39 |

+

- **Markedly better general capabilities**, such as instruction following, tool usage, text generation, and alignment with human preferences.

|

| 40 |

+

- **Enhanced 256K long-context understanding** capabilities.

|

| 41 |

+

|

| 42 |

+

**NOTE**: This version has an increased thinking length. We strongly recommend its use in highly complex reasoning tasks.

## Model Overview

This repo contains the FP8 version of **Qwen3-4B-Thinking-2507**, which has the following features:
- Type: Causal Language Models
- Training Stage: Pretraining & Post-training
- Number of Parameters: 4.0B
- Number of Parameters (Non-Embedding): 3.6B
- Number of Layers: 36
- Number of Attention Heads (GQA): 32 for Q and 8 for KV
- Context Length: **262,144 natively**.
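
As a rough cross-check of the parameter counts above, the values in `config.json` can be plugged into a per-layer count. This is a sketch that assumes a standard Qwen3 decoder layer: bias-free q/k/v/o projections, RMSNorm on query and key heads, a gate/up/down SwiGLU MLP, and two RMSNorms per layer. It also relies on `tie_word_embeddings: true`, so the embedding matrix is counted once. With grouped-query attention, the k/v projections are a quarter the size of the q projection (8 vs. 32 heads). The helper names are illustrative only.

```python
# Hypothetical helper: estimates parameter counts from config.json values.
# Assumed layer layout: q/k/v/o projections (no bias), q_norm/k_norm over
# head_dim, SwiGLU MLP (gate/up/down), two RMSNorms, tied input/output embeddings.
HIDDEN = 2560          # hidden_size
INTERMEDIATE = 9728    # intermediate_size
LAYERS = 36            # num_hidden_layers
Q_HEADS = 32           # num_attention_heads
KV_HEADS = 8           # num_key_value_heads (GQA)
HEAD_DIM = 128         # head_dim
VOCAB = 151936         # vocab_size


def layer_params() -> int:
    q_proj = HIDDEN * Q_HEADS * HEAD_DIM
    k_proj = HIDDEN * KV_HEADS * HEAD_DIM
    v_proj = HIDDEN * KV_HEADS * HEAD_DIM
    o_proj = Q_HEADS * HEAD_DIM * HIDDEN
    qk_norms = 2 * HEAD_DIM            # q_norm and k_norm weights
    mlp = 3 * HIDDEN * INTERMEDIATE    # gate_proj, up_proj, down_proj
    layer_norms = 2 * HIDDEN           # input_layernorm, post_attention_layernorm
    return q_proj + k_proj + v_proj + o_proj + qk_norms + mlp + layer_norms


non_embedding = LAYERS * layer_params() + HIDDEN  # plus the final model norm
embedding = VOCAB * HIDDEN                         # shared with lm_head (tied)
total = non_embedding + embedding

print(f"non-embedding: {non_embedding / 1e9:.2f}B")
print(f"total:         {total / 1e9:.2f}B")
```

Under these assumptions it prints about 3.63B non-embedding and 4.02B total parameters, consistent with the rounded 3.6B and 4.0B figures.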

**NOTE: This model supports only thinking mode, so specifying `enable_thinking=True` is no longer required.**

Additionally, to enforce model thinking, the default chat template automatically includes `<think>`. Therefore, it is normal for the model's output to contain only `</think>` without an explicit opening `<think>` tag.
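
When `add_generation_prompt=True`, the template in `chat_template.jinja` ends the prompt with `<|im_start|>assistant\n<think>\n`. Generation therefore starts inside the reasoning block, and only the closing tag appears in the output. The sketch below reproduces only the simplest path of that template (one user message, no system prompt, no tools). It then splits decoded text on the last `</think>`, analogous to the token-ID search in the Quickstart. The function names are hypothetical; real prompts should still be built with `tokenizer.apply_chat_template`.

```python
def render_generation_prompt(user_text: str) -> str:
    """Single user turn plus generation prompt, following the template's simplest path."""
    return (
        "<|im_start|>user\n" + user_text + "<|im_end|>\n"
        + "<|im_start|>assistant\n<think>\n"  # the template pre-fills <think>
    )


def split_reasoning(decoded: str) -> tuple[str, str]:
    """Return (thinking, answer) by splitting on the last </think>.
    If no </think> is present, treat the whole text as the answer."""
    if "</think>" not in decoded:
        return "", decoded.strip("\n")
    thinking, _, answer = decoded.rpartition("</think>")
    return thinking.strip("\n"), answer.strip("\n")


prompt = render_generation_prompt("What is 2 + 2?")
reply = "The user wants 2 + 2, which is 4.\n</think>\n\n2 + 2 = 4."
print(repr(prompt))
print(split_reasoning(reply))
```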

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3/), [GitHub](https://github.com/QwenLM/Qwen3), and [Documentation](https://qwen.readthedocs.io/en/latest/).

## Performance

| | Qwen3-30B-A3B Thinking | Qwen3-4B Thinking | Qwen3-4B-Thinking-2507 |
|--- | --- | --- | --- |
| **Knowledge** | | | |
| MMLU-Pro | **78.5** | 70.4 | 74.0 |
| MMLU-Redux | **89.5** | 83.7 | 86.1 |
| GPQA | **65.8** | 55.9 | **65.8** |
| SuperGPQA | **51.8** | 42.7 | 47.8 |
| **Reasoning** | | | |
| AIME25 | 70.9 | 65.6 | **81.3** |
| HMMT25 | 49.8 | 42.1 | **55.5** |
| LiveBench 20241125 | **74.3** | 63.6 | 71.8 |
| **Coding** | | | |
| LiveCodeBench v6 (25.02-25.05) | **57.4** | 48.4 | 55.2 |
| CFEval | **1940** | 1671 | 1852 |
| OJBench | **20.7** | 16.1 | 17.9 |
| **Alignment** | | | |
| IFEval | 86.5 | 81.9 | **87.4** |
| Arena-Hard v2$ | **36.3** | 13.7 | 34.9 |
| Creative Writing v3 | **79.1** | 61.1 | 75.6 |
| WritingBench | 77.0 | 73.5 | **83.3** |
| **Agent** | | | |
| BFCL-v3 | 69.1 | 65.9 | **71.2** |
| TAU1-Retail | 61.7 | 33.9 | **66.1** |
| TAU1-Airline | 32.0 | 32.0 | **48.0** |
| TAU2-Retail | 34.2 | 38.6 | **53.5** |
| TAU2-Airline | 36.0 | 28.0 | **58.0** |
| TAU2-Telecom | 22.8 | 17.5 | **27.2** |
| **Multilingualism** | | | |
| MultiIF | 72.2 | 66.3 | **77.3** |
| MMLU-ProX | **73.1** | 61.0 | 64.2 |
| INCLUDE | **71.9** | 61.8 | 64.4 |
| PolyMATH | 46.1 | 40.0 | **46.2** |

$ For reproducibility, we report the win rates evaluated by GPT-4.1.

\& For highly challenging tasks (including PolyMATH and all reasoning and coding tasks), we use an output length of 81,920 tokens. For all other tasks, we set the output length to 32,768.

## Quickstart

Qwen3 is supported in the latest Hugging Face `transformers`, and we advise you to use the latest version of `transformers`.

With `transformers<4.51.0`, you will encounter the following error:
```
KeyError: 'qwen3'
```

The following code snippet illustrates how to use the model to generate content based on given inputs.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3-4B-Thinking-2507-FP8"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "Give me a short introduction to large language model."
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=32768
)
# keep only the newly generated tokens (drop the prompt)
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

# parsing thinking content
try:
    # find the position just after the last occurrence of token 151668 (</think>)
    index = len(output_ids) - output_ids[::-1].index(151668)
except ValueError:
    # no </think> token: treat the whole output as content
    index = 0

thinking_content = tokenizer.decode(output_ids[:index], skip_special_tokens=True).strip("\n")
content = tokenizer.decode(output_ids[index:], skip_special_tokens=True).strip("\n")

print("thinking content:", thinking_content)  # no opening <think> tag
print("content:", content)

```

For deployment, you can use `sglang>=0.4.6.post1` or `vllm>=0.8.5` to create an OpenAI-compatible API endpoint:
- SGLang:
    ```shell
    python -m sglang.launch_server --model-path Qwen/Qwen3-4B-Thinking-2507-FP8 --context-length 262144 --reasoning-parser deepseek-r1
    ```
- vLLM:
    ```shell
    vllm serve Qwen/Qwen3-4B-Thinking-2507-FP8 --max-model-len 262144 --enable-reasoning --reasoning-parser deepseek_r1
    ```

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length. However, since the model may require long token sequences for reasoning, we strongly recommend using a context length greater than 131,072 when possible.**
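
Much of the memory pressure behind this note comes from the KV cache, which grows linearly with context length. The sketch below is a back-of-the-envelope estimate using `num_hidden_layers`, `num_key_value_heads`, and `head_dim` from `config.json`. It assumes a bfloat16 cache with no cache quantization, paging overhead, or batching. These are assumptions; actual usage depends on the serving framework and its settings.

```python
NUM_LAYERS = 36       # num_hidden_layers
NUM_KV_HEADS = 8      # num_key_value_heads (GQA: 32 query heads share 8 KV heads)
HEAD_DIM = 128        # head_dim
BYTES_PER_VALUE = 2   # assumption: bfloat16 KV cache


def kv_cache_bytes(num_tokens: int) -> int:
    """Bytes needed to cache keys and values for every layer and KV head, one sequence."""
    per_token = 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE  # K and V
    return per_token * num_tokens


for ctx in (32_768, 131_072, 262_144):
    print(f"{ctx:>7,} tokens -> {kv_cache_bytes(ctx) / 2**30:.1f} GiB")
```

Under these assumptions, each token costs 144 KiB of cache. A single 262,144-token sequence therefore needs about 36 GiB, and a 131,072-token one about 18 GiB, on top of the model weights and activations.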

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

## Note on FP8

For convenience and performance, we have provided an `fp8`-quantized model checkpoint for Qwen3, whose name ends with `-FP8`. The quantization method is fine-grained `fp8` quantization with a block size of 128. You can find more details in the `quantization_config` field in `config.json`.
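
In block-wise (fine-grained) quantization, each 128 × 128 tile of a weight matrix gets its own scale, so an outlier only costs precision within its own tile. The pure-Python sketch below shows one common way to derive such scales, assuming the FP8 E4M3 format: the tile's max |w| divided by 448, the largest finite E4M3 value. It then rescales the weights into the FP8 range. It is illustrative only. It does not round to the actual FP8 grid, and it is not necessarily how this checkpoint was produced. It also leaves out activations, which `"activation_scheme": "dynamic"` indicates are quantized at runtime.

```python
# Illustrative sketch of block-wise FP8 weight scaling (assumes FP8 E4M3).
FP8_E4M3_MAX = 448.0  # largest finite value representable in FP8 E4M3
BLOCK = 128           # from "weight_block_size": [128, 128] in config.json


def block_scales(weight: list[list[float]], block: int = BLOCK) -> list[list[float]]:
    """Compute one scale per (block x block) tile: max |w| in the tile / 448."""
    rows, cols = len(weight), len(weight[0])
    scales = []
    for r0 in range(0, rows, block):
        row_of_scales = []
        for c0 in range(0, cols, block):
            amax = max(
                abs(weight[r][c])
                for r in range(r0, min(r0 + block, rows))
                for c in range(c0, min(c0 + block, cols))
            )
            # An all-zero tile gets scale 1.0 to avoid dividing by zero later.
            row_of_scales.append(amax / FP8_E4M3_MAX if amax > 0 else 1.0)
        scales.append(row_of_scales)
    return scales


def rescale_for_fp8(weight: list[list[float]], scales: list[list[float]],
                    block: int = BLOCK) -> list[list[float]]:
    """Divide every element by its tile's scale so it fits in [-448, 448].
    A real implementation would then round to the FP8 grid and keep the
    scales for dequantization (w is approximately q * scale)."""
    return [
        [value / scales[r // block][c // block] for c, value in enumerate(row)]
        for r, row in enumerate(weight)
    ]


# Example: a 256 x 128 matrix whose two tiles have very different magnitudes.
w = [[1.0] * 128 for _ in range(128)] + [[-0.5] * 128 for _ in range(128)]
s = block_scales(w)            # a 2 x 1 grid of per-tile scales
q = rescale_for_fp8(w, s)
print(len(s), len(s[0]))
```

Because each tile is scaled independently, the small-magnitude tile still spans the full FP8 range instead of being squeezed by the larger tile's maximum.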

You can use the Qwen3-4B-Thinking-2507-FP8 model with several inference frameworks, including `transformers`, `sglang`, and `vllm`, in the same way as the original bfloat16 model.

## Agentic Use

Qwen3 excels at tool calling. We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to make the best use of Qwen3's agentic abilities. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

To define the available tools, you can use an MCP configuration file, use Qwen-Agent's integrated tools, or integrate other tools yourself.

```python
from qwen_agent.agents import Assistant

# Define LLM
# Using OpenAI-compatible API endpoint. It is recommended to disable the reasoning and the tool call parsing
# functionality of the deployment frameworks and let Qwen-Agent automate the related operations. For example,
# `VLLM_USE_MODELSCOPE=true vllm serve Qwen/Qwen3-4B-Thinking-2507-FP8 --served-model-name Qwen3-4B-Thinking-2507 --max-model-len 262144`.
llm_cfg = {
    'model': 'Qwen3-4B-Thinking-2507',

    # Use a custom endpoint compatible with OpenAI API:
    'model_server': 'http://localhost:8000/v1',  # api_base without reasoning and tool call parsing
    'api_key': 'EMPTY',
    'generate_cfg': {
        'thought_in_content': True,
    },
}

# Define Tools
tools = [
    {'mcpServers': {  # You can specify the MCP configuration file
            'time': {
                'command': 'uvx',
                'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']
            },
            "fetch": {
                "command": "uvx",
                "args": ["mcp-server-fetch"]
            }
        }
    },
    'code_interpreter',  # Built-in tools
]

# Define Agent
bot = Assistant(llm=llm_cfg, function_list=tools)

# Streaming generation
messages = [{'role': 'user', 'content': 'https://qwenlm.github.io/blog/ Introduce the latest developments of Qwen'}]
for responses in bot.run(messages=messages):
    pass
print(responses)
```
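
You may query the endpoint directly rather than through Qwen-Agent, with the framework's tool-call parser disabled as the comments above recommend. In that case, tool calls arrive as raw text in the format defined by `chat_template.jinja`: a JSON object with `name` and `arguments` wrapped in `<tool_call></tool_call>` tags. Below is a minimal extraction sketch, assuming the reasoning part has already been split off at `</think>`. `parse_tool_calls` and the `get_current_time` tool in the example are hypothetical.

```python
import json
import re

# Matches blocks shaped like the template's instruction:
# <tool_call>\n{"name": <function-name>, "arguments": <args-json-object>}\n</tool_call>
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(.*?)\s*</tool_call>", re.DOTALL)


def parse_tool_calls(text: str) -> tuple[str, list[dict]]:
    """Split raw assistant text (reasoning already removed) into
    (remaining content, list of {"name": ..., "arguments": ...} dicts)."""
    calls = []
    for payload in TOOL_CALL_RE.findall(text):
        try:
            call = json.loads(payload)
        except json.JSONDecodeError:
            continue  # skip a malformed call rather than failing the whole reply
        if isinstance(call, dict) and "name" in call:
            calls.append({"name": call["name"], "arguments": call.get("arguments", {})})
    content = TOOL_CALL_RE.sub("", text).strip()
    return content, calls


# Example with a hypothetical tool name and arguments.
raw = (
    "Let me check the time.\n"
    "<tool_call>\n"
    '{"name": "get_current_time", "arguments": {"timezone": "Asia/Shanghai"}}\n'
    "</tool_call>"
)
content, calls = parse_tool_calls(raw)
print(content)
print(calls)
```

Skipping malformed payloads keeps one bad call from discarding the rest of the reply; a production client might instead report the error back to the model.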

## Best Practices

To achieve optimal performance, we recommend the following settings:

1. **Sampling Parameters**:
   - We suggest using `Temperature=0.6`, `TopP=0.95`, `TopK=20`, and `MinP=0`.
   - For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

2. **Adequate Output Length**: We recommend using an output length of 32,768 tokens for most queries. For benchmarking on highly complex problems, such as those found in math and programming competitions, we suggest setting the max output length to 81,920 tokens. This provides the model with sufficient space to generate detailed and comprehensive responses, thereby enhancing its overall performance.

3. **Standardize Output Format**: We recommend using prompts to standardize model outputs when benchmarking.
   - **Math Problems**: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.
   - **Multiple-Choice Questions**: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."

4. **No Thinking Content in History**: In multi-turn conversations, the historical model output should include only the final output, not the thinking content. This is implemented in the provided Jinja2 chat template. However, for frameworks that do not use the Jinja2 chat template directly, it is up to developers to ensure that this best practice is followed.
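
Frameworks that build prompts without the Jinja2 template can apply the same rule when appending model replies to the conversation history. The sketch below mirrors the template's `content.split('</think>')[-1].lstrip('\n')` logic. The helper names are hypothetical. The sketch also ignores the template's special handling of multi-step tool calls after the last user query.

```python
def strip_thinking(content: str) -> str:
    """Drop everything up to the last </think>, as the chat template does."""
    if "</think>" in content:
        return content.split("</think>")[-1].lstrip("\n")
    return content


def append_assistant_turn(history: list[dict], raw_reply: str) -> list[dict]:
    """Return a new history with the reply added, without its reasoning."""
    return history + [{"role": "assistant", "content": strip_thinking(raw_reply)}]


history = [{"role": "user", "content": "Hi!"}]
history = append_assistant_turn(history, "The user greets me.\n</think>\n\nHello! How can I help?")
history.append({"role": "user", "content": "Tell me a joke."})
print(history)
```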

### Citation

If you find our work helpful, feel free to cite us.

```
@misc{qwen3technicalreport,
      title={Qwen3 Technical Report},
      author={Qwen Team},
      year={2025},
      eprint={2505.09388},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2505.09388},
}
```

added_tokens.json

ADDED


@@ -0,0 +1,28 @@

{
  "</think>": 151668,
  "</tool_call>": 151658,
  "</tool_response>": 151666,
  "<think>": 151667,
  "<tool_call>": 151657,
  "<tool_response>": 151665,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}

chat_template.jinja

ADDED


@@ -0,0 +1,86 @@

{%- if tools %}
    {{- '<|im_start|>system\n' }}
    {%- if messages[0].role == 'system' %}
        {{- messages[0].content + '\n\n' }}
    {%- endif %}
    {{- "# Tools\n\nYou may call one or more functions to assist with the user query.\n\nYou are provided with function signatures within <tools></tools> XML tags:\n<tools>" }}
    {%- for tool in tools %}
        {{- "\n" }}
        {{- tool | tojson }}
    {%- endfor %}
    {{- "\n</tools>\n\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\n<tool_call>\n{\"name\": <function-name>, \"arguments\": <args-json-object>}\n</tool_call><|im_end|>\n" }}
{%- else %}
    {%- if messages[0].role == 'system' %}
        {{- '<|im_start|>system\n' + messages[0].content + '<|im_end|>\n' }}
    {%- endif %}
{%- endif %}
{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}
{%- for message in messages[::-1] %}
    {%- set index = (messages|length - 1) - loop.index0 %}
    {%- if ns.multi_step_tool and message.role == "user" and message.content is string and not(message.content.startswith('<tool_response>') and message.content.endswith('</tool_response>')) %}
        {%- set ns.multi_step_tool = false %}
        {%- set ns.last_query_index = index %}
    {%- endif %}
{%- endfor %}
{%- for message in messages %}
    {%- if message.content is string %}
        {%- set content = message.content %}
    {%- else %}
        {%- set content = '' %}
    {%- endif %}
    {%- if (message.role == "user") or (message.role == "system" and not loop.first) %}
        {{- '<|im_start|>' + message.role + '\n' + content + '<|im_end|>' + '\n' }}
    {%- elif message.role == "assistant" %}
        {%- set reasoning_content = '' %}
        {%- if message.reasoning_content is string %}
            {%- set reasoning_content = message.reasoning_content %}
        {%- else %}
            {%- if '</think>' in content %}
                {%- set reasoning_content = content.split('</think>')[0].rstrip('\n').split('<think>')[-1].lstrip('\n') %}
                {%- set content = content.split('</think>')[-1].lstrip('\n') %}
            {%- endif %}
        {%- endif %}
        {%- if loop.index0 > ns.last_query_index %}
            {%- if loop.last or (not loop.last and reasoning_content) %}
                {{- '<|im_start|>' + message.role + '\n<think>\n' + reasoning_content.strip('\n') + '\n</think>\n\n' + content.lstrip('\n') }}
            {%- else %}
                {{- '<|im_start|>' + message.role + '\n' + content }}
            {%- endif %}
        {%- else %}
            {{- '<|im_start|>' + message.role + '\n' + content }}
        {%- endif %}
        {%- if message.tool_calls %}
            {%- for tool_call in message.tool_calls %}
                {%- if (loop.first and content) or (not loop.first) %}
                    {{- '\n' }}
                {%- endif %}
                {%- if tool_call.function %}
                    {%- set tool_call = tool_call.function %}
                {%- endif %}
                {{- '<tool_call>\n{"name": "' }}
                {{- tool_call.name }}
                {{- '", "arguments": ' }}
                {%- if tool_call.arguments is string %}
                    {{- tool_call.arguments }}
                {%- else %}
                    {{- tool_call.arguments | tojson }}
                {%- endif %}
                {{- '}\n</tool_call>' }}
            {%- endfor %}
        {%- endif %}
        {{- '<|im_end|>\n' }}
    {%- elif message.role == "tool" %}
        {%- if loop.first or (messages[loop.index0 - 1].role != "tool") %}
            {{- '<|im_start|>user' }}
        {%- endif %}
        {{- '\n<tool_response>\n' }}
        {{- content }}
        {{- '\n</tool_response>' }}
        {%- if loop.last or (messages[loop.index0 + 1].role != "tool") %}
            {{- '<|im_end|>\n' }}
        {%- endif %}
    {%- endif %}
{%- endfor %}
{%- if add_generation_prompt %}
    {{- '<|im_start|>assistant\n<think>\n' }}
{%- endif %}

config.json

ADDED


@@ -0,0 +1,152 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen3ForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": false,

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"torch_dtype": "bfloat16",

|

| 8 |

+

"eos_token_id": 151645,

|

| 9 |

+

"head_dim": 128,

|

| 10 |

+

"hidden_act": "silu",

|

| 11 |

+

"hidden_size": 2560,

|

| 12 |

+

"initializer_range": 0.02,

|

| 13 |

+

"intermediate_size": 9728,

|

| 14 |

+

"layer_types": [

|

| 15 |

+

"full_attention",

|

| 16 |

+

"full_attention",

|

| 17 |

+

"full_attention",

|

| 18 |

+

"full_attention",

|

| 19 |

+

"full_attention",

|

| 20 |

+

"full_attention",

|

| 21 |

+

"full_attention",

|

| 22 |

+

"full_attention",

|

| 23 |

+

"full_attention",

|

| 24 |

+

"full_attention",

|

| 25 |

+

"full_attention",

|

| 26 |

+

"full_attention",

|

| 27 |

+

"full_attention",

|

| 28 |

+

"full_attention",

|

| 29 |

+

"full_attention",

|

| 30 |

+

"full_attention",

|

| 31 |

+

"full_attention",

|

| 32 |

+

"full_attention",

|

| 33 |

+

"full_attention",

|

| 34 |

+

"full_attention",

|

| 35 |

+

"full_attention",

|

| 36 |

+

"full_attention",

|

| 37 |

+

"full_attention",

|

| 38 |

+

"full_attention",

|

| 39 |

+

"full_attention",

|

| 40 |

+

"full_attention",

|

| 41 |

+

"full_attention",

|

| 42 |

+

"full_attention",

|

| 43 |

+

"full_attention",

|

| 44 |

+

"full_attention",

|

| 45 |

+

"full_attention",

|

| 46 |

+

"full_attention",

|

| 47 |

+

"full_attention",

|

| 48 |

+

"full_attention",

|

| 49 |

+

"full_attention",

|

| 50 |

+

"full_attention"

|

| 51 |

+

],

|

| 52 |

+

"max_position_embeddings": 262144,

|

| 53 |

+

"max_window_layers": 36,

|

| 54 |

+

"model_type": "qwen3",

|

| 55 |

+

"num_attention_heads": 32,

|

| 56 |

+

"num_hidden_layers": 36,

|

| 57 |

+

"num_key_value_heads": 8,

|

| 58 |

+

"pad_token_id": 151654,

|

| 59 |

+

"quantization_config": {

|

| 60 |

+

"activation_scheme": "dynamic",

|

| 61 |

+

"modules_to_not_convert": [

|

| 62 |

+

"lm_head",

|

| 63 |

+

"model.layers.0.input_layernorm",

|

| 64 |

+

"model.layers.0.post_attention_layernorm",

|

| 65 |

+

"model.layers.1.input_layernorm",

|

| 66 |

+

"model.layers.1.post_attention_layernorm",

|

| 67 |

+

"model.layers.2.input_layernorm",

|

| 68 |

+

"model.layers.2.post_attention_layernorm",

|

| 69 |

+

"model.layers.3.input_layernorm",

|

| 70 |

+

"model.layers.3.post_attention_layernorm",

|

| 71 |

+

"model.layers.4.input_layernorm",

|

| 72 |

+

"model.layers.4.post_attention_layernorm",

|

| 73 |

+

"model.layers.5.input_layernorm",

|

| 74 |

+

"model.layers.5.post_attention_layernorm",

|

| 75 |

+

"model.layers.6.input_layernorm",

|

| 76 |

+

"model.layers.6.post_attention_layernorm",

|

| 77 |

+

"model.layers.7.input_layernorm",

|

| 78 |

+

"model.layers.7.post_attention_layernorm",

|

| 79 |

+

"model.layers.8.input_layernorm",

|

| 80 |

+

"model.layers.8.post_attention_layernorm",

|

| 81 |

+

"model.layers.9.input_layernorm",

|

| 82 |

+

"model.layers.9.post_attention_layernorm",

|

| 83 |

+

"model.layers.10.input_layernorm",

|

| 84 |

+

"model.layers.10.post_attention_layernorm",

|

| 85 |

+

"model.layers.11.input_layernorm",

|

| 86 |

+

"model.layers.11.post_attention_layernorm",

|

| 87 |

+

"model.layers.12.input_layernorm",

|

| 88 |

+

      "model.layers.12.post_attention_layernorm",
      "model.layers.13.input_layernorm",
      "model.layers.13.post_attention_layernorm",
      "model.layers.14.input_layernorm",
      "model.layers.14.post_attention_layernorm",
      "model.layers.15.input_layernorm",
      "model.layers.15.post_attention_layernorm",
      "model.layers.16.input_layernorm",
      "model.layers.16.post_attention_layernorm",
      "model.layers.17.input_layernorm",
      "model.layers.17.post_attention_layernorm",
      "model.layers.18.input_layernorm",
      "model.layers.18.post_attention_layernorm",
      "model.layers.19.input_layernorm",
      "model.layers.19.post_attention_layernorm",
      "model.layers.20.input_layernorm",
      "model.layers.20.post_attention_layernorm",
      "model.layers.21.input_layernorm",
      "model.layers.21.post_attention_layernorm",
      "model.layers.22.input_layernorm",
      "model.layers.22.post_attention_layernorm",
      "model.layers.23.input_layernorm",
      "model.layers.23.post_attention_layernorm",
      "model.layers.24.input_layernorm",
      "model.layers.24.post_attention_layernorm",
      "model.layers.25.input_layernorm",
      "model.layers.25.post_attention_layernorm",
      "model.layers.26.input_layernorm",
      "model.layers.26.post_attention_layernorm",
      "model.layers.27.input_layernorm",
      "model.layers.27.post_attention_layernorm",
      "model.layers.28.input_layernorm",
      "model.layers.28.post_attention_layernorm",
      "model.layers.29.input_layernorm",
      "model.layers.29.post_attention_layernorm",
      "model.layers.30.input_layernorm",
      "model.layers.30.post_attention_layernorm",
      "model.layers.31.input_layernorm",
      "model.layers.31.post_attention_layernorm",
      "model.layers.32.input_layernorm",
      "model.layers.32.post_attention_layernorm",
      "model.layers.33.input_layernorm",
      "model.layers.33.post_attention_layernorm",
      "model.layers.34.input_layernorm",
      "model.layers.34.post_attention_layernorm",
      "model.layers.35.input_layernorm",
      "model.layers.35.post_attention_layernorm"
    ],
    "quant_method": "fp8",
    "weight_block_size": [
      128,
      128
    ]
  },
  "rms_norm_eps": 1e-06,
  "rope_scaling": null,
  "rope_theta": 5000000,
  "sliding_window": null,
  "tie_word_embeddings": true,
  "transformers_version": "4.57.1",
  "unsloth_fixed": true,
  "use_cache": true,
  "use_sliding_window": false,
  "vocab_size": 151936
}
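The `quantization_config` above describes block-wise FP8 storage. A `weight_block_size` of `[128, 128]` suggests one scale factor per 128 x 128 tile of each weight matrix, and the modules in `modules_to_not_convert` (the layernorms) stay unquantized. The Python sketch below illustrates that reading under stated assumptions: plain floats stand in for FP8 values, dequantization multiplies each element by its tile's stored factor (checkpoints differ on whether that factor is the scale or its inverse), and module names are matched exactly. All helper names are hypothetical and not part of any library.

```python
import math
from typing import List, Sequence, Tuple

# Block size copied from config.json's quantization_config.
WEIGHT_BLOCK_SIZE: Tuple[int, int] = (128, 128)


def scale_grid_shape(rows: int, cols: int,
                     block: Tuple[int, int] = WEIGHT_BLOCK_SIZE) -> Tuple[int, int]:
    """Number of (row, col) tiles, i.e. scale factors, for a rows x cols weight.

    Partial tiles at the edges still get their own scale, hence ceil().
    """
    block_rows, block_cols = block
    return math.ceil(rows / block_rows), math.ceil(cols / block_cols)


def dequantize_blockwise(q: Sequence[Sequence[float]],
                         scales: Sequence[Sequence[float]],
                         block: Tuple[int, int] = WEIGHT_BLOCK_SIZE) -> List[List[float]]:
    """Multiply every element by the factor of the tile it falls in.

    `q` stands in for the stored FP8 values (plain floats in this sketch).
    Assumption: the stored per-tile factor is multiplied back in.
    """
    block_rows, block_cols = block
    return [
        [value * scales[i // block_rows][j // block_cols] for j, value in enumerate(row)]
        for i, row in enumerate(q)
    ]


def is_quantized(module_name: str, modules_to_not_convert: Sequence[str]) -> bool:
    """Listed modules keep their original dtype (exact-name match is an assumption)."""
    return module_name not in modules_to_not_convert
```

For example, a 300 x 128 weight needs a 3 x 1 grid of scales, because the last row tile is only partially filled.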

generation_config.json

ADDED

@@ -0,0 +1,14 @@

{
  "bos_token_id": 151643,
  "do_sample": true,
  "eos_token_id": [
    151645,
    151643
  ],
  "max_length": 262144,
  "pad_token_id": 151654,
  "temperature": 0.6,
  "top_k": 20,
  "top_p": 0.95,
  "transformers_version": "4.57.1"
}
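`generation_config.json` turns sampling on (`do_sample: true`) with temperature 0.6, top-k 20 and top-p 0.95, and treats either 151645 (`<|im_end|>`) or 151643 (`<|endoftext|>`) as end-of-sequence. The pure-Python sketch below shows how those three settings narrow a next-token distribution, applied in the order temperature, top-k, top-p. To my knowledge this matches the order Hugging Face transformers uses for its sampling warpers, but the code is an illustration rather than the library's implementation; `sample_next_token` and `is_finished` are hypothetical names.

```python
import math
import random
from typing import Dict, Optional

# Defaults copied from generation_config.json.
TEMPERATURE = 0.6
TOP_K = 20
TOP_P = 0.95
EOS_TOKEN_IDS = {151645, 151643}


def _softmax(logits: Dict[int, float]) -> Dict[int, float]:
    """Numerically stable softmax over a {token_id: logit} mapping."""
    m = max(logits.values())
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_next_token(logits: Dict[int, float],
                      temperature: float = TEMPERATURE,
                      top_k: int = TOP_K,
                      top_p: float = TOP_P,
                      rng: Optional[random.Random] = None) -> int:
    rng = rng or random.Random()
    # 1. Temperature: dividing by a value below 1 sharpens the distribution.
    scaled = {tok: v / temperature for tok, v in logits.items()}
    # 2. Top-k: keep only the k highest-scoring tokens.
    top = dict(sorted(scaled.items(), key=lambda kv: kv[1], reverse=True)[:top_k])
    # 3. Top-p: keep the smallest high-probability prefix whose mass reaches top_p.
    probs = sorted(_softmax(top).items(), key=lambda kv: kv[1], reverse=True)
    kept, mass = [], 0.0
    for tok, p in probs:
        kept.append((tok, p))
        mass += p
        if mass >= top_p:
            break
    # 4. Sample from the surviving tokens, renormalized by their total mass.
    r = rng.random() * sum(p for _, p in kept)
    for tok, p in kept:
        r -= p
        if r < 0:
            return tok
    return kept[-1][0]  # guard against floating-point round-off


def is_finished(token_id: int) -> bool:
    """Generation stops on either configured EOS id."""
    return token_id in EOS_TOKEN_IDS
```

With a dominant logit, top-p alone can cut the candidate set to a single token, so the sampled result becomes deterministic.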

merges.txt

ADDED

The diff for this file is too large to render.

model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:2b95c10a1938ed8c0e0a64b544dd32fa6ef870f3174976377cc95c4fb14ac0f9
size 4412140424
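`model.safetensors` (and `tokenizer.json` further down) is committed as a Git LFS pointer: three `key value` lines giving the spec version, the SHA-256 of the real file, and its size in bytes. Below is a minimal sketch for checking a downloaded file against such a pointer, assuming you have the pointer text and can open the file as a binary stream. The function names are hypothetical.

```python
import hashlib
import io
from typing import BinaryIO, Dict

# Pointer text copied from the model.safetensors entry above.
MODEL_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:2b95c10a1938ed8c0e0a64b544dd32fa6ef870f3174976377cc95c4fb14ac0f9
size 4412140424
"""


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Split each 'key value' line of a Git LFS pointer into a dict."""
    fields: Dict[str, str] = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def verify_stream(stream: BinaryIO, pointer: Dict[str, str], chunk_size: int = 1 << 20) -> bool:
    """Check a stream's byte count and SHA-256 against its LFS pointer."""
    algo, _, expected_hex = pointer["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    digest = hashlib.sha256()
    size = 0
    # Read in chunks so multi-gigabyte weight files never sit fully in memory.
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
        size += len(chunk)
    return size == int(pointer["size"]) and digest.hexdigest() == expected_hex


if __name__ == "__main__":
    pointer = parse_lfs_pointer(MODEL_POINTER)
    print(pointer["size"])  # byte count of the real weights file
    # Self-check on in-memory data; for the real file use open(path, "rb").
    data = b"example bytes"
    fake = {"oid": "sha256:" + hashlib.sha256(data).hexdigest(), "size": str(len(data))}
    print(verify_stream(io.BytesIO(data), fake))
```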

special_tokens_map.json

ADDED

@@ -0,0 +1,31 @@

{
  "additional_special_tokens": [
    "<|im_start|>",
    "<|im_end|>",
    "<|object_ref_start|>",
    "<|object_ref_end|>",
    "<|box_start|>",
    "<|box_end|>",
    "<|quad_start|>",
    "<|quad_end|>",
    "<|vision_start|>",
    "<|vision_end|>",
    "<|vision_pad|>",
    "<|image_pad|>",
    "<|video_pad|>"
  ],
  "eos_token": {
    "content": "<|im_end|>",
    "lstrip": false,
    "normalized": false,
    "rstrip": false,
    "single_word": false
  },
  "pad_token": {
    "content": "<|vision_pad|>",
    "lstrip": false,
    "normalized": false,
    "rstrip": false,
    "single_word": false
  }
}
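The pad token here is `<|vision_pad|>`, which is ID 151654 in `tokenizer_config.json` and matches `pad_token_id` in `generation_config.json`. `tokenizer_config.json` also sets `padding_side` to `left`. Left padding keeps each prompt's last real token at the end of its row, so in a batch the newly generated tokens follow it directly. Below is a small sketch of that padding step on raw token IDs. `left_pad` is a hypothetical helper and not a tokenizer method.

```python
from typing import List, Tuple

# <|vision_pad|> is token 151654 in tokenizer_config.json,
# the same value as pad_token_id in generation_config.json.
PAD_TOKEN_ID = 151654


def left_pad(batch: List[List[int]],
             pad_id: int = PAD_TOKEN_ID) -> Tuple[List[List[int]], List[List[int]]]:
    """Pad every sequence on the left to the batch's longest length.

    Returns (input_ids, attention_mask). The mask is 0 over padding and 1 over
    real tokens, so attention ignores the pad positions.
    """
    width = max(len(seq) for seq in batch)
    input_ids, attention_mask = [], []
    for seq in batch:
        n_pad = width - len(seq)
        input_ids.append([pad_id] * n_pad + list(seq))
        attention_mask.append([0] * n_pad + [1] * len(seq))
    return input_ids, attention_mask
```

Right padding would instead place pad tokens between a short prompt and its continuation. That is why decoder-only generation usually pads on the left.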

tokenizer.json

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:aeb13307a71acd8fe81861d94ad54ab689df773318809eed3cbe794b4492dae4
size 11422654

tokenizer_config.json

ADDED

@@ -0,0 +1,241 @@

{
  "add_bos_token": false,
  "add_prefix_space": false,
  "added_tokens_decoder": {
    "151643": {
      "content": "<|endoftext|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151644": {
      "content": "<|im_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151645": {
      "content": "<|im_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151646": {
      "content": "<|object_ref_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151647": {
      "content": "<|object_ref_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151648": {
      "content": "<|box_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151649": {
      "content": "<|box_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151650": {
      "content": "<|quad_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151651": {
      "content": "<|quad_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151652": {
      "content": "<|vision_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151653": {
      "content": "<|vision_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151654": {
      "content": "<|vision_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151655": {
      "content": "<|image_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151656": {
      "content": "<|video_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151657": {
      "content": "<tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151658": {
      "content": "</tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151659": {
      "content": "<|fim_prefix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151660": {
      "content": "<|fim_middle|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151661": {
      "content": "<|fim_suffix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151662": {
      "content": "<|fim_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151663": {
      "content": "<|repo_name|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151664": {
      "content": "<|file_sep|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151665": {
      "content": "<tool_response>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151666": {
      "content": "</tool_response>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151667": {
      "content": "<think>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151668": {
      "content": "</think>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    }
  },
  "additional_special_tokens": [
    "<|im_start|>",
    "<|im_end|>",
    "<|object_ref_start|>",
    "<|object_ref_end|>",
    "<|box_start|>",
    "<|box_end|>",
    "<|quad_start|>",
    "<|quad_end|>",
    "<|vision_start|>",
    "<|vision_end|>",
    "<|vision_pad|>",
    "<|image_pad|>",
    "<|video_pad|>"
  ],
  "bos_token": null,
  "clean_up_tokenization_spaces": false,
  "eos_token": "<|im_end|>",
  "errors": "replace",
  "extra_special_tokens": {},
  "model_max_length": 262144,
  "pad_token": "<|vision_pad|>",
  "padding_side": "left",
  "split_special_tokens": false,
  "tokenizer_class": "Qwen2Tokenizer",
  "unk_token": null,