CavaFace: Optimized for Mobile Deployment

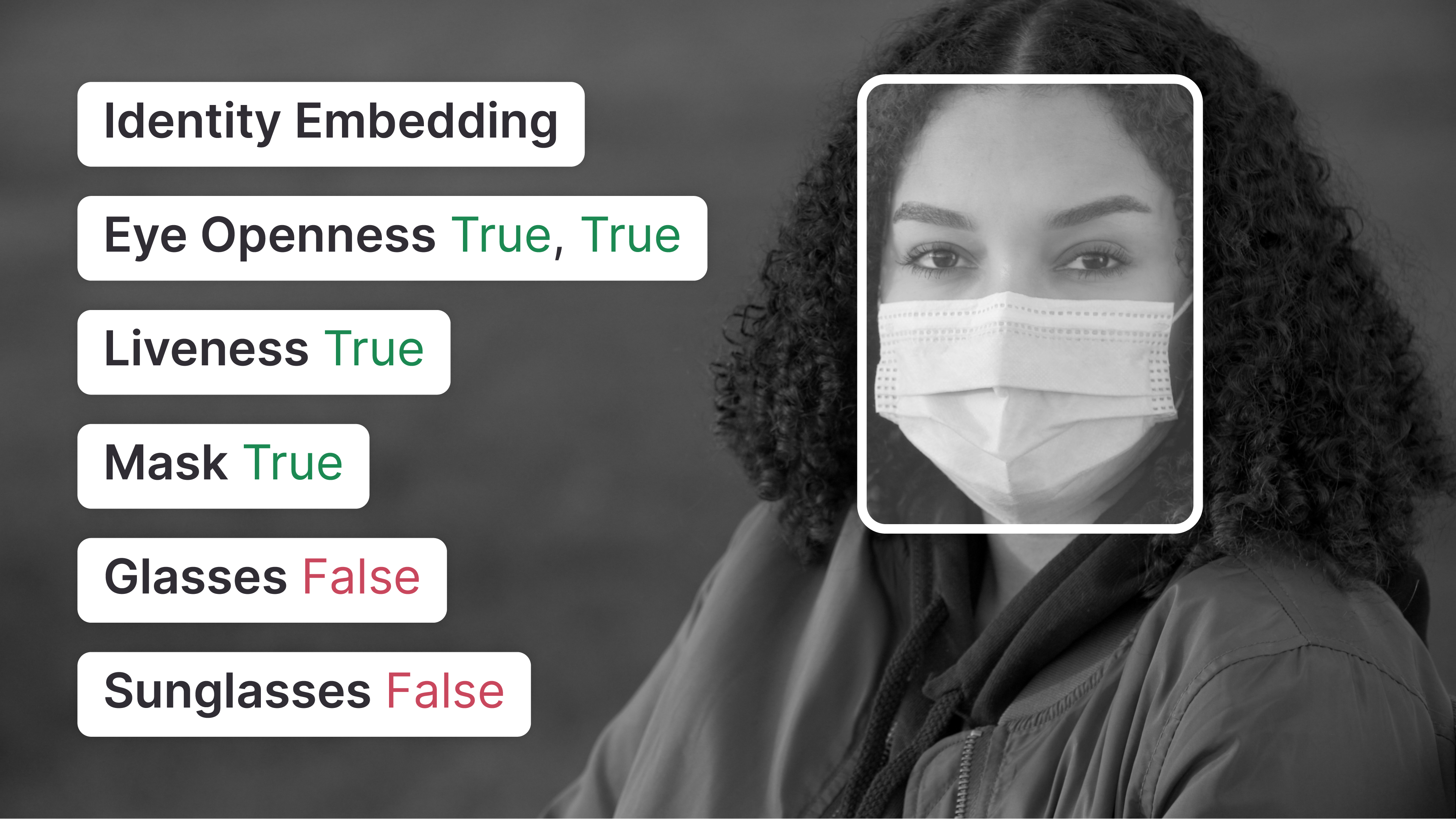

Comprehensive facial analysis by extracting face features

A PyTorch-based framework for training face recognition models that generate facial embeddings for verification and identification tasks.

This model is an implementation of CavaFace found here.

This repository provides scripts to run CavaFace on Qualcomm® devices. More details on model performance across various devices can be found here.
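Verification (1:1) and identification (1:N) with facial embeddings usually reduce to comparing vectors. The sketch below assumes each face has already been mapped to an embedding by the model and compares embeddings with cosine similarity. The helper names, the toy 4-D vectors, and the `0.5` threshold are hypothetical; a real threshold would need to be tuned on a validation set.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two embedding vectors, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cannot compare a zero vector")
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def verify(a: Sequence[float], b: Sequence[float], threshold: float = 0.5) -> bool:
    """Verification (1:1): same identity if similarity reaches the hypothetical threshold."""
    return cosine_similarity(a, b) >= threshold


def identify(query: Sequence[float], gallery: Dict[str, Sequence[float]]) -> str:
    """Identification (1:N): return the gallery identity most similar to the query."""
    return max(gallery, key=lambda name: cosine_similarity(query, gallery[name]))


# Toy 4-D vectors stand in for real CavaFace embeddings.
gallery = {"alice": [1.0, 0.0, 1.0, 0.0], "bob": [0.0, 1.0, 0.0, 1.0]}
probe = [0.9, 0.1, 1.1, 0.0]
print(verify(probe, gallery["alice"]))  # True
print(identify(probe, gallery))         # alice
```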

Model Details

- Model Type: Object detection
- Model Stats:
  - Model checkpoint: IR_SE_100_Combined_Epoch_24.pt
  - Input resolution: 112x112
  - Number of parameters: 65.5M
  - Model size (float): 249.96 MB
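As a rough sanity check, the float model size follows from the parameter count. This sketch assumes every weight is stored as 4-byte `float32` and that MB here means MiB (2^20 bytes); both are assumptions. Because 65.5M is a rounded count, the result lands slightly below the listed 249.96 MB.

```python
BYTES_PER_FLOAT32 = 4
BYTES_PER_MIB = 2 ** 20


def float32_size_mib(num_params: int) -> float:
    """Approximate weight storage in MiB, assuming every parameter is stored as float32."""
    return num_params * BYTES_PER_FLOAT32 / BYTES_PER_MIB


# 65.5M is a rounded count, so the result lands slightly below 249.96.
print(f"{float32_size_mib(65_500_000):.2f} MiB")  # 249.86 MiB
```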

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model |
|---|---|---|---|---|---|---|---|---|
| CavaFace | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 24.767 | 0 - 74 | NPU | CavaFace.tflite |
| CavaFace | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 24.739 | 0 - 58 | NPU | CavaFace.dlc |
| CavaFace | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 7.121 | 0 - 179 | NPU | CavaFace.tflite |
| CavaFace | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 8.871 | 0 - 69 | NPU | CavaFace.dlc |
| CavaFace | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 4.401 | 0 - 414 | NPU | CavaFace.tflite |
| CavaFace | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 4.311 | 0 - 65 | NPU | CavaFace.dlc |
| CavaFace | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | ONNX | 4.637 | 0 - 12 | NPU | CavaFace.onnx.zip |
| CavaFace | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 7.086 | 0 - 74 | NPU | CavaFace.tflite |
| CavaFace | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 30.228 | 0 - 58 | NPU | CavaFace.dlc |
| CavaFace | float | SA7255P ADP | Qualcomm® SA7255P | TFLITE | 24.767 | 0 - 74 | NPU | CavaFace.tflite |
| CavaFace | float | SA7255P ADP | Qualcomm® SA7255P | QNN_DLC | 24.739 | 0 - 58 | NPU | CavaFace.dlc |
| CavaFace | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | TFLITE | 4.34 | 0 - 479 | NPU | CavaFace.tflite |
| CavaFace | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | QNN_DLC | 4.315 | 0 - 55 | NPU | CavaFace.dlc |
| CavaFace | float | SA8295P ADP | Qualcomm® SA8295P | TFLITE | 8.217 | 0 - 75 | NPU | CavaFace.tflite |
| CavaFace | float | SA8295P ADP | Qualcomm® SA8295P | QNN_DLC | 7.973 | 0 - 62 | NPU | CavaFace.dlc |
| CavaFace | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | TFLITE | 4.349 | 0 - 420 | NPU | CavaFace.tflite |
| CavaFace | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | QNN_DLC | 4.318 | 0 - 73 | NPU | CavaFace.dlc |
| CavaFace | float | SA8775P ADP | Qualcomm® SA8775P | TFLITE | 7.086 | 0 - 74 | NPU | CavaFace.tflite |
| CavaFace | float | SA8775P ADP | Qualcomm® SA8775P | QNN_DLC | 30.228 | 0 - 58 | NPU | CavaFace.dlc |
| CavaFace | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 3.228 | 0 - 174 | NPU | CavaFace.tflite |
| CavaFace | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 3.206 | 0 - 67 | NPU | CavaFace.dlc |
| CavaFace | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 3.377 | 0 - 66 | NPU | CavaFace.onnx.zip |
| CavaFace | float | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | TFLITE | 2.668 | 0 - 79 | NPU | CavaFace.tflite |
| CavaFace | float | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | QNN_DLC | 2.629 | 0 - 65 | NPU | CavaFace.dlc |
| CavaFace | float | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | ONNX | 2.792 | 0 - 63 | NPU | CavaFace.onnx.zip |
| CavaFace | float | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen5 Mobile | TFLITE | 2.285 | 0 - 80 | NPU | CavaFace.tflite |
| CavaFace | float | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen5 Mobile | QNN_DLC | 2.254 | 0 - 64 | NPU | CavaFace.dlc |
| CavaFace | float | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen5 Mobile | ONNX | 2.446 | 0 - 63 | NPU | CavaFace.onnx.zip |
| CavaFace | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 4.473 | 418 - 418 | NPU | CavaFace.dlc |
| CavaFace | float | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 4.487 | 127 - 127 | NPU | CavaFace.onnx.zip |
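The inference times in the table convert directly into an upper bound on throughput for back-to-back single-image inference. The sketch below copies a few rows from the table; it ignores pre- and post-processing and any data-transfer overhead, so real end-to-end rates will be lower.

```python
# (device, runtime) -> inference time in ms, copied from the table above.
LATENCY_MS = {
    ("Snapdragon 8 Elite Gen 5 QRD", "QNN_DLC"): 2.254,
    ("Samsung Galaxy S25", "TFLITE"): 2.668,
    ("Samsung Galaxy S24", "ONNX"): 3.377,
    ("SA8775P ADP", "QNN_DLC"): 30.228,
}


def inferences_per_second(latency_ms: float) -> float:
    """Throughput if inferences run back to back with no other overhead."""
    return 1000.0 / latency_ms


for (device, runtime), ms in LATENCY_MS.items():
    print(f"{device:30s} {runtime:8s} {ms:7.3f} ms  ~{inferences_per_second(ms):6.1f} inf/s")

fastest = min(LATENCY_MS, key=LATENCY_MS.get)
print("Fastest listed configuration:", fastest)
```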

Installation

Install the package via pip:

```bash
# NOTE: 3.10 <= PYTHON_VERSION < 3.14 is supported.
pip install "qai-hub-models[cavaface]"
```
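The supported interpreter range in the install note can be checked before installing. This is a small standalone sketch (for example, saved as a hypothetical `check_python.py`); `is_supported_python` is a hypothetical helper, not part of `qai-hub-models`.

```python
import sys
from typing import Optional, Sequence

MIN_VERSION = (3, 10)  # inclusive lower bound from the install note
MAX_VERSION = (3, 14)  # exclusive upper bound from the install note


def is_supported_python(version: Optional[Sequence[int]] = None) -> bool:
    """Return True if (major, minor) satisfies 3.10 <= version < 3.14."""
    if version is None:
        version = sys.version_info[:2]
    return MIN_VERSION <= tuple(version[:2]) < MAX_VERSION


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "supported" if is_supported_python() else "not supported"
    print(f"Python {current} is {status} by qai-hub-models[cavaface]")
```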

Configure Qualcomm® AI Hub Workbench to run this model on a cloud-hosted device

Sign in to Qualcomm® AI Hub Workbench with your Qualcomm® ID. Once signed in, navigate to Account -> Settings -> API Token.

With this API token, you can configure your client to run models on the cloud-hosted devices.

```bash
qai-hub configure --api_token API_TOKEN
```

Navigate to the docs for more information.

Demo off target

The package contains a simple end-to-end demo that downloads pre-trained weights and runs this model on a sample input.

```bash
python -m qai_hub_models.models.cavaface.demo
```

The above demo runs a reference implementation of pre-processing, model inference, and post-processing.

NOTE: If you want to run the demo in a Jupyter Notebook or a Google Colab-like environment, add the following to your cell instead of the command above.

```
%run -m qai_hub_models.models.cavaface.demo
```
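For orientation only, the sketch below shows the kind of pre- and post-processing an embedding model with a 112x112 input typically needs: an aligned RGB face crop goes from HWC `uint8` to a batched NCHW `float32` array, and the raw output is L2-normalized. The [0, 1] scaling and the NCHW layout are assumptions; the reference demo's actual normalization and face alignment may differ, so check the `qai_hub_models` source before relying on it.

```python
import numpy as np

INPUT_SIZE = 112  # input resolution listed under Model Details


def preprocess_face(face_rgb: np.ndarray) -> np.ndarray:
    """Turn an aligned 112x112x3 uint8 RGB crop into a 1x3x112x112 float32 batch.

    Assumption: pixels are scaled to [0, 1]; the reference demo may normalize differently.
    """
    if face_rgb.shape != (INPUT_SIZE, INPUT_SIZE, 3):
        raise ValueError(f"expected ({INPUT_SIZE}, {INPUT_SIZE}, 3), got {face_rgb.shape}")
    x = face_rgb.astype(np.float32) / 255.0  # uint8 [0, 255] -> float32 [0, 1]
    x = np.transpose(x, (2, 0, 1))           # HWC -> CHW
    return x[np.newaxis, ...]                # CHW -> NCHW with batch size 1


def postprocess_embedding(raw: np.ndarray) -> np.ndarray:
    """Flatten a raw model output and L2-normalize it for cosine comparisons."""
    vec = raw.reshape(-1).astype(np.float32)
    norm = np.linalg.norm(vec)
    if norm == 0.0:
        raise ValueError("cannot normalize an all-zero embedding")
    return vec / norm


crop = np.full((INPUT_SIZE, INPUT_SIZE, 3), 128, dtype=np.uint8)
print(preprocess_face(crop).shape)  # (1, 3, 112, 112)
```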

Run model on a cloud-hosted device

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm® device. This script does the following:

- Runs a performance check on a cloud-hosted device.
- Downloads compiled assets that can be deployed on-device for Android.
- Runs an accuracy check between PyTorch and on-device outputs.

```bash
python -m qai_hub_models.models.cavaface.export
```

How does this work?

This export script leverages Qualcomm® AI Hub to optimize, validate, and deploy this model on-device. Let's go through each step below in detail:

Step 1: Compile model for on-device deployment

To compile a PyTorch model for on-device deployment, we first trace the model in memory using `torch.jit.trace` and then call the `submit_compile_job` API.

```python
import torch

import qai_hub as hub
from qai_hub_models.models.cavaface import Model

# Load the model
torch_model = Model.from_pretrained()

# Device
device = hub.Device("Samsung Galaxy S25")

# Trace model
input_shape = torch_model.get_input_spec()
sample_inputs = torch_model.sample_inputs()

pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

# Compile model on a specific device
compile_job = hub.submit_compile_job(
    model=pt_model,
    device=device,
    input_specs=input_shape,
)

# Get target model to run on-device
target_model = compile_job.get_target_model()
```
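The tracing call above relies on two structures that must agree: an input spec mapping each input name to a `(shape, dtype)` pair, and sample inputs mapping the same names to lists of arrays, of which the trace takes the first (`data[0]`). The sketch below checks that agreement offline with NumPy only. The input name `"image"` and the helper `first_samples_matching_spec` are hypothetical; inspect `torch_model.get_input_spec()` for the real names.

```python
from typing import Dict, List, Tuple

import numpy as np

# Hypothetical spec in the {name: (shape, dtype)} form; "image" is an illustrative name.
INPUT_SPEC: Dict[str, Tuple[Tuple[int, ...], str]] = {"image": ((1, 3, 112, 112), "float32")}


def first_samples_matching_spec(
    spec: Dict[str, Tuple[Tuple[int, ...], str]],
    sample_inputs: Dict[str, List[np.ndarray]],
) -> List[np.ndarray]:
    """Pick data[0] for each input, as the trace call does, and validate it against the spec."""
    if set(spec) != set(sample_inputs):
        raise ValueError(f"input names differ: {sorted(spec)} vs {sorted(sample_inputs)}")
    first = []
    for name, data in sample_inputs.items():
        shape, dtype = spec[name]
        arr = data[0]  # same indexing as the trace call
        if arr.shape != tuple(shape) or arr.dtype != np.dtype(dtype):
            raise ValueError(f"{name}: got {arr.shape} {arr.dtype}, expected {shape} {dtype}")
        first.append(arr)
    return first


samples = {"image": [np.zeros((1, 3, 112, 112), dtype=np.float32)]}
print([a.shape for a in first_samples_matching_spec(INPUT_SPEC, samples)])  # [(1, 3, 112, 112)]
```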

Step 2: Performance profiling on cloud-hosted device

After compiling the model in Step 1, you can profile it on-device using `target_model`. Note that this script runs the model on a device automatically provisioned in the cloud. Once the job is submitted, navigate to the provided job URL to view a variety of on-device performance metrics.

```python
profile_job = hub.submit_profile_job(
    model=target_model,
    device=device,
)
```

Step 3: Verify on-device accuracy

To verify the accuracy of the model on-device, you can run on-device inference on sample input data on the same cloud-hosted device.

```python
input_data = torch_model.sample_inputs()
inference_job = hub.submit_inference_job(
    model=target_model,
    device=device,
    inputs=input_data,
)
on_device_output = inference_job.download_output_data()
```

With the output of the model, you can compute metrics such as PSNR or relative error, or spot-check the output against the expected output.
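A minimal sketch of that comparison, assuming the PyTorch reference output and the on-device output are already NumPy arrays of the same shape. It also assumes `download_output_data()` maps each output name to a list of arrays, so the on-device array would be `on_device_output[name][0]`; synthetic arrays stand in here. PSNR uses the largest absolute reference value as the peak signal, which is one common convention rather than necessarily the one the export script uses.

```python
import numpy as np


def psnr(reference: np.ndarray, test: np.ndarray, eps: float = 1e-10) -> float:
    """PSNR in dB, using the largest absolute reference value as the peak signal."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    if ref.shape != tst.shape:
        raise ValueError(f"shape mismatch: {ref.shape} vs {tst.shape}")
    mse = np.mean((ref - tst) ** 2)
    peak = np.max(np.abs(ref))
    return float(10.0 * np.log10(peak ** 2 / (mse + eps)))


def relative_l2_error(reference: np.ndarray, test: np.ndarray) -> float:
    """||reference - test|| / ||reference||, a scale-free error measure."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    return float(np.linalg.norm(ref - tst) / np.linalg.norm(ref))


# Synthetic stand-ins for the PyTorch and on-device outputs.
reference = np.array([0.0, 2.0])
on_device = np.array([0.0, 1.0])
print(round(psnr(reference, on_device), 2))     # 9.03
print(relative_l2_error(reference, on_device))  # 0.5
```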

Note: On-device profiling and inference require access to Qualcomm® AI Hub Workbench. Sign up for access.

Deploying compiled model to Android

The models can be deployed using multiple runtimes:

- TensorFlow Lite (`.tflite` export): This tutorial provides a guide to deploying the `.tflite` model in an Android application.
- QNN (`.so` export): This sample app provides instructions on how to use the `.so` shared library in an Android application.

View on Qualcomm® AI Hub

Get more details on CavaFace's performance across various devices here. Explore all available models on Qualcomm® AI Hub.

License

- The license for the original implementation of CavaFace can be found here.

- The license for the compiled assets for on-device deployment can be found here.


Community

- Join our AI Hub Slack community to collaborate, post questions and learn more about on-device AI.

- For questions or feedback, please reach out to us.
